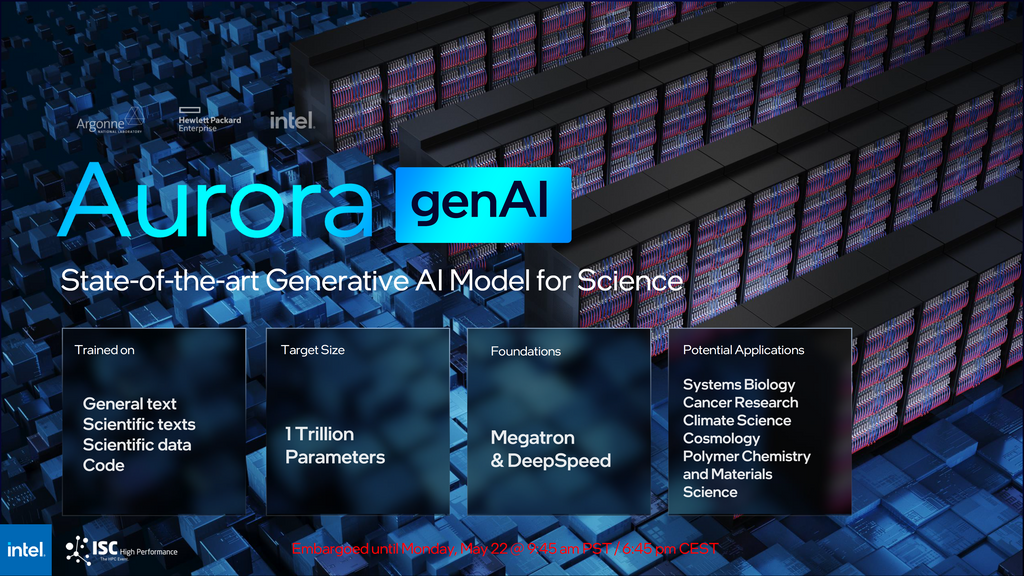

The 2-exaflop Aurora supercomputer is a beast of a machine, and the system will be used to power the Aurora genAI model.

Announced at today's ISC23 keynote, the Aurora genAI model is going to be trained on general text, scientific texts, scientific data, and domain-related code.

This is going to be a purely science-focused generative AI model, with potential applications being:

- Systems Biology
- Cancer Research
- Climate Science
- Cosmology
- Polymer Chemistry and Materials Science

The foundations of the Intel Aurora genAI model are Megatron and DeepSpeed. Most importantly, the target size for the new model is 1 trillion parameters. By comparison, the free, public version of ChatGPT is reported to have about 175 billion parameters.

That's roughly a 5.7x increase in the number of parameters.
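The 5.7x figure can be checked with a quick calculation. This is only a sketch: it assumes 1 trillion parameters for Aurora genAI (the stated target, not a measured size) and 175 billion for ChatGPT, which is the count the 5.7x ratio implies. The constant and function names are made up for illustration.

```python
# Parameter counts as quoted in the article; both are assumptions for this check.
AURORA_GENAI_PARAMS = 1_000_000_000_000  # 1 trillion (target size, not final)
CHATGPT_PARAMS = 175_000_000_000         # 175 billion (count implied by the 5.7x ratio)


def parameter_ratio(larger: int, smaller: int) -> float:
    """Return how many times larger one parameter count is than the other."""
    return larger / smaller


ratio = parameter_ratio(AURORA_GENAI_PARAMS, CHATGPT_PARAMS)
print(f"{ratio:.1f}x")
```

With a count of 175 million instead, the same calculation would give a ratio in the thousands, so only the billion-scale figure is consistent with 5.7x.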

“The project aims to leverage the full potential of the Aurora supercomputer to produce a resource that can be used for downstream science at the Department of Energy labs and in collaboration with others,” said Rick Stevens, Argonne associate laboratory director.

These generative AI models for science will be trained on general text, code, scientific texts, and structured scientific data from biology, chemistry, materials science, physics, medicine, and other sources.

The resulting models (with as many as 1 trillion parameters) will be used in a variety of scientific applications, from the design of molecules and materials to the synthesis of knowledge across millions of sources to suggest new and interesting experiments in systems biology, polymer chemistry and energy materials, climate science, and cosmology.

The model will also be used to accelerate the identification of biological processes related to cancer and other diseases and suggest targets for drug design.