Synthetic Minds RSS

The neuroscience of lies, honesty, and self-control with Robert Sapolsky

Can we condition ourselves to become heroes? Neuroscientist Robert Sapolsky on the science of temptation and the limitations of your brain’s frontal cortex. Ever hear the expression “it’s all in your mind”? According to Robert Sapolsky, all the negativity in the world might be coming from one part of the brain: the frontal cortex. The science of temptation runs parallel to the science of why people make “bad” decisions. Sapolsky explains how active the frontal cortex can be in some people when they have the opportunity to do a bad thing… and how calm it can be in...

Maintaining "standards of evidence" is the most important and least appreciated idea in science.

We live in a world dominated by science, but most people don't understand its most essential characteristic: establishing standards of evidence to keep us from getting fooled by our own biases and opinions.

KEY TAKEAWAYS

- Maintaining standards of evidence is the most important and least appreciated idea in science.

- Modern science was established in the late Renaissance when networks of researchers began working out best practices for linking evidence with conclusions.

- In the face of science denial and attempts to create a post-truth society, we have to protect the primacy of standards of evidence in science and society.

Adam Frank

I...

Now Microsoft has a new AI model - Kosmos-1

Microsoft's Kosmos-1 can take image and audio prompts, paving the way for the next stage beyond ChatGPT's text prompts. Microsoft has unveiled Kosmos-1, which it describes as a multimodal large language model (MLLM) that can respond not only to language prompts but also to visual cues, and which can be used for an array of tasks, including image captioning, visual question answering, and more. OpenAI's ChatGPT has helped popularize the concept of LLMs, such as the GPT (Generative Pre-trained Transformer) model, and the possibility of transforming a text prompt or input into an output. While people are impressed by these chat capabilities,...

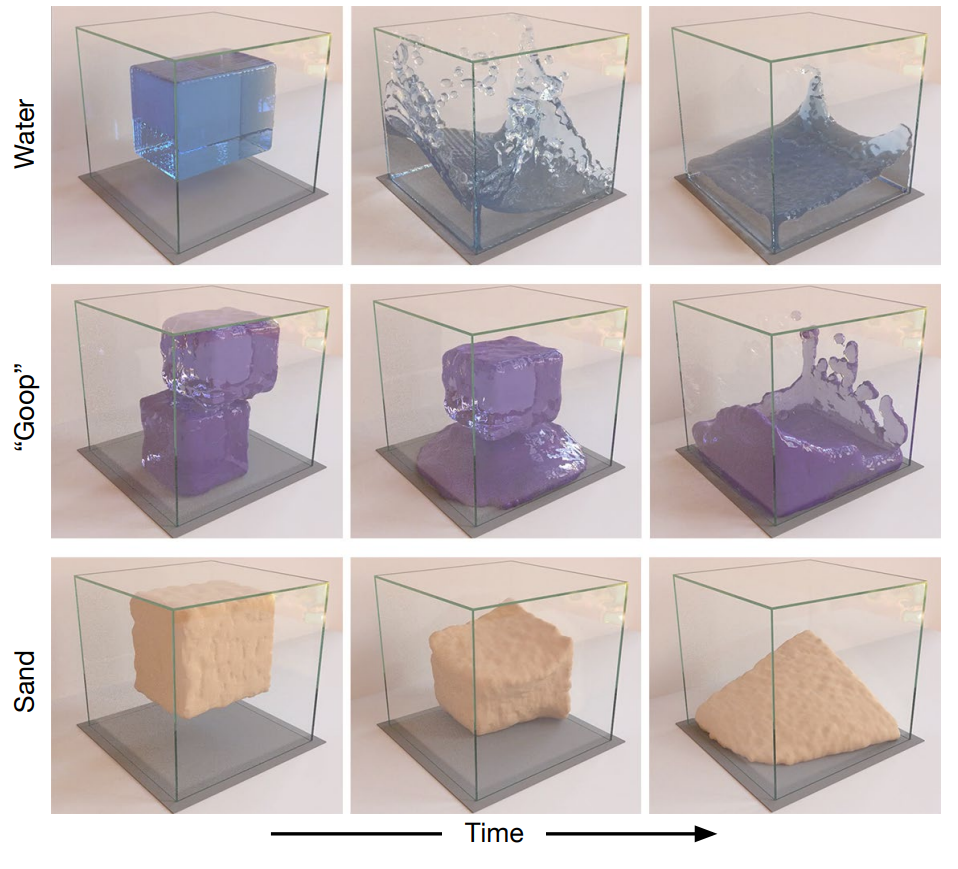

Learning to Simulate Complex Physics with Graph Networks

How Well Can DeepMind's AI Learn Physics? Our framework, which we term "Graph Network-based Simulators" (GNS), represents the state of a physical system with particles, expressed as nodes in a graph, and computes dynamics via learned message-passing. Here we present a machine learning framework and model implementation that can learn to simulate a wide variety of challenging physical domains, involving fluids, rigid solids, and deformable materials interacting with one another. Our results show that our model can generalize from single-timestep predictions with thousands of particles during training, to different initial conditions, thousands...

Tags

- All

- AGI

- AI

- AI Factories

- AI Models

- Artificial Cognition

- Autism Spectrum

- Avatars

- Azure Open AI

- Bias Compensation

- cobots

- Cognition Enhancement

- Cognitive Bias

- DALL-E 2

- Deep Thought

- Digital Minds

- Ethical AI

- Evolutionary Psychology

- Finance

- Gad Saad

- Generative AI

- GPT-4

- GPT-5

- Ignite 2022

- Infrastructure

- John Koetsier

- Kosmos-1

- Lex Fridman

- Malevolent Minds

- Microsoft Copilot

- Microsoft Power Virtual Agents

- Multimodal Large Language Model

- Neil deGrasse Tyson

- Pre-Singularity

- Real-Time Inferencing

- Sabine Hossenfelder

- Sam Harris

- Scalability

- Science

- Singularity

- Singularity Philosophy

- Singularity Ready

- Social Robots

- Storytelling

- Synthetic Minds

- Tesla